How I Built a 20-Prompt System to Run a Go-To-Market Operation

14 interviews, 4 layers, and a living document that replaced gut feel with evidence.

I built a system of 20 AI prompts that processes interview transcripts through four layers — extraction, synthesis, action, and tracking — to produce an evidence-based go-to-market strategy. Instead of gut feel, the system maintains a versioned “Current Truth” that updates as new evidence emerges. The architecture is transferable to any operation that runs on conversations.

Last year I ran go-to-market for a German infrastructure company entering the US mid-market. Platform Engineering as a Service — a complex product, a technical buyer, and zero brand awareness in the target market.

The standard playbook would have been: define ICP, write messaging, build a prospect list, run outreach sequences, iterate on response rates. And that's roughly what we tried first. Ten weeks of LinkedIn outreach across two profiles, 700+ connection requests, eight messaging sequences.

Zero meetings booked.

(I wrote about the outreach diagnosis separately.)

But the outreach failure wasn't the interesting part. The interesting part was what we built to learn from it — and from every conversation we'd ever had with customers, prospects, and the internal team.

A system of 20 interconnected prompts that turned raw transcripts into strategy.

Why Gut Feel Fails in Go-To-Market Strategy

We had 14 interview transcripts. Seven with customers and prospects. Seven with internal team members — the CEO, VP Product, account engineers, co-founder.

In a traditional consulting engagement, someone reads all 14 transcripts, highlights themes, and writes a strategy document. It takes weeks. The themes depend on what the reader notices. The strategy depends on what the reader believes.

The problem with that approach: people are bad at synthesising large volumes of qualitative data objectively. We notice what confirms what we already think. We miss patterns that don't match our existing mental model. And we can't easily track how our beliefs change over time.

I wanted a system that would extract signal from noise consistently, synthesise across all interviews without bias, track evidence for and against every hypothesis, and update its own conclusions when the evidence changed.

“Meta-prompting gave me the method to build it.”

The Prompt-Based GTM Architecture

Every prompt in the system was built using the same approach from Meta-Prompting 101 — expert instructions embedded, context gathering phase, structured output. But instead of standalone prompts, these were designed as a pipeline. Each layer's output feeds the next layer's input.

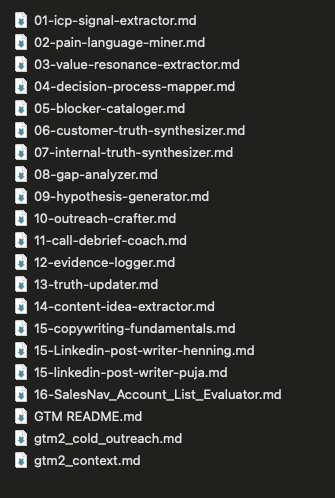

Four layers. Thirteen core prompts. Plus LinkedIn post writers, copywriting fundamentals, and a Sales Navigator account evaluator — 20 files total in the project. This prompt-based GTM architecture was designed to be evidence-based from day one: every layer produces structured output that the next layer can build on.

Layer 1: Extracting Customer Signal From Interviews

The extraction layer is where most of the work happens. For each of the 14 transcripts, I ran five prompts.

The ICP Signal Extractor pulls out who they are — company size, tech stack, team structure, buying signals. Not just demographics but fit indicators: do they have the problem we solve, do they know they have it, are they in a position to buy?

The Pain Language Miner is the most valuable prompt in the system. It pulls verbatim quotes — the exact words customers use to describe their problems, grouped by theme and persona. Not my interpretation of their pain. Their words. This matters because messaging that uses the customer's language outperforms messaging that uses your language, every time.

The Value Resonance Extractor tracks what landed during the conversation. Which benefits excited them. Which fell flat. Which confused them. This is the feedback loop most companies never build — knowing not just what you said, but how it was received.

The Decision Process Mapper captures how they buy. Who's involved, what criteria matter, what the timeline looks like, where budget comes from. For infrastructure decisions at our price point ($200–300K/year), this was often a 6–12 month process with 3–5 stakeholders.

The Blocker Cataloger collects everything that stops deals. Not just objections — those are surface level. The real blockers: compliance requirements we couldn't meet yet, timezone coverage gaps, the “graduation risk” where companies with 12+ platform engineers decide to bring it in-house.

Five prompts, 14 transcripts. 70 extraction runs. Each one producing structured, comparable output.

Layer 2: Synthesising Customer Truth vs. Internal Truth

Once all extractions were complete, three synthesis prompts aggregated everything.

The Customer Truth Synthesizer took the seven external extractions and produced a unified view: who our customer actually is, what they actually care about, how they actually buy, and what actually stops them.

“Customers didn't care about Kubernetes. They cared about not having to care about Kubernetes.”

The winning frame wasn't “we manage your clusters” — it was “we're essentially a DevOps team for your infrastructure.” Multiple customers used almost identical language independently.

The Internal Truth Synthesizer did the same for the seven internal interviews. What the company believed about itself, its capabilities, its positioning. The most honest finding: “Ask 10 people what we do, get 12 answers.” Identity confusion was the upstream problem.

The Gap Analyzer compared both. Where did internal beliefs match market reality? Where did they diverge? Overall alignment score: about 5 out of 10. The company's self-image and the market's perception were significantly misaligned in several areas.

That gap analysis became the most important document in the entire project. It replaced opinion with evidence. When the CEO and I discussed strategy, we weren't debating beliefs — we were looking at where the data said we were right and where it said we were wrong.

Layer 3: Hypothesis-Driven Outreach

The action layer turned analysis into outreach and learning.

The Hypothesis Generator created testable statements from the synthesis. Not “we think CTOs are our buyer” but “CTOs at mid-market SaaS companies with 1–5 platform engineers will respond to outreach framed around reducing infrastructure hiring costs — test with 20 targeted messages, success = 3+ replies.” Each hypothesis had a confidence score and kill criteria.

The Outreach Crafter built messages designed to test specific hypotheses. Not generic cold emails — each message was engineered to validate or invalidate a specific belief about what resonates with a specific persona.

The Call Debrief Coach ran after every conversation. Structured extraction: what did we learn, what hypotheses does this support or challenge, what should we test next. This was the habit-formation prompt — it turned every call into a data point instead of a memory.

Layer 4: Evidence Tracking and the Current Truth

The tracking layer maintained the system's memory.

The Evidence Logger kept a running scorecard on every active hypothesis. After each conversation or outreach batch, new evidence got logged with source attribution. Over time, hypotheses would accumulate enough evidence to be validated, invalidated, or refined.

The Truth Updater was the final prompt. When enough evidence had shifted, it produced a new version of the “Current Truth” — the single document that drove all GTM decisions. Versioned with a changelog. You could see what the team believed in January versus March, and exactly which evidence caused each update.

“Not permanent truth. Not gut feel dressed up as strategy. Current truth — what the evidence says right now, explicitly versioned, explicitly updateable.”

The Weekly Rhythm

The system ran on a cadence:

This rhythm turned GTM from a campaign into a learning system. Every week, the system knew more than it did the week before. Every conversation fed back into the model. The gap between what we believed and what was true narrowed consistently.

What Evidence-Based GTM Produced

From the 14 interviews and 10 weeks of outreach, the system surfaced findings that traditional analysis would have missed or taken much longer to reach.

The cold outreach diagnosis was evidence-based, not opinion-based. Connection requests from a no-name account generated decent acceptance rates (18%) but zero meetings. The root cause wasn't messaging — it was awareness. B2B buyers of high-ACV infrastructure services need significant exposure before engaging. Cold DMs try to shortcut trust-building to zero. At our price point and awareness level, it didn't work.

The ICP refined itself through evidence. The original target was broad. The extraction prompts revealed that the sweet spot was narrower: companies with 30–50 engineers total, 1–5 person platform teams, AWS-focused, with a “buy vs. build” culture. Companies above a certain platform team size graduated to self-managed. Retail was a dead end.

The messaging shifted from features to identity. The most resonant frame — validated across multiple independent interviews — was “You're not an infrastructure company. You're a SaaS company.” That single line, surfaced by the Pain Language Miner across separate transcripts, became the centrepiece of all positioning.

Why Prompt-Based GTM Transfers to Any Conversation-Driven Operation

The specific findings are specific to this company. But the prompt-based GTM system is transferable.

Any operation that runs on conversations — sales, consulting, customer success, recruiting — can use evidence-based go-to-market the same way. Record the conversations. Extract signal with consistent prompts. Synthesise across all data points. Generate testable hypotheses. Track evidence. Update beliefs.

The meta-prompting method from Article 1 is how you build each individual prompt. The skills framework from Article 3 is how you make them reusable and versionable. The project architecture from Article 2 is how you give the whole system persistent context.

This GTM system is what happens when all three come together on a real project.

The full system architecture — prompt directory, workflow guide, layer descriptions — is available as a download. Adapt it to your operation.

What I'd Do Differently

Start the evidence system earlier. We ran outreach for weeks before building the extraction and tracking layers. The learning from those early conversations was partially lost — captured in memory and notes, not in structured extractions.

Build the Internal Truth first. We started with customers, which felt natural. But the gap between internal beliefs and market reality turned out to be the most important finding. If I'd synthesised the internal interviews first, we would have surfaced the identity confusion earlier and adjusted positioning sooner.

And one more: version the prompts themselves, not just the outputs. My early extraction prompts were rougher than the later ones. As I refined them, earlier extractions became inconsistent with later ones. Next time, I'd lock a prompt version before running a full batch, then upgrade and re-run if needed.

The biggest change: I'd use Claude Skills instead of uploading .md files into the project. Skills live outside any single project — you can reference them per chat, combine them on the fly, and reuse them across clients. The prompt directory below is exactly what a skill library looks like:

The full prompt directory. Each .md file is a reusable skill.

Danny builds AI-powered operating systems for NZ businesses. The GTM prompt system described here is one expression of a broader approach: structured AI that learns from every conversation.

Want a system like this for your operation?

I build AI-powered GTM, sales, and strategy systems from your conversations and expertise. Not templates — systems built from your data.

Start a conversation →More field notes

The Most Expensive Thing in Your Business Is What You Haven’t Written Down

How one or two conversations become a complete digital presence — no forms, no briefs, no agencies.

ReadShift Down, Not Left

Why platform engineering replaces shift left as the structural fix for developer burnout.

ReadI Built a Scaling Dashboard for a $200K/Month UGC Agency

Interactive economics dashboard + scaling roadmap for a creative agency.

ReadWhen Cold Outreach Hits a Wall

How content-led GTM replaced cold outreach for a B2B platform engineering company.

ReadWhy I’m Betting on Vercel’s AI Cloud

$20/month unlimited sites and what it means for small businesses.

ReadMeta-Prompting 101

The method behind AI systems that sound like you.

Read